How Thousands of Personal Conversations Appeared in Google Search Results

A brief OpenAI experiment exposed intimate user conversations to public search engines, sparking urgent questions about AI privacy and forcing immediate platform changes.

OpenAI swiftly removed a ChatGPT feature in early August 2025 after discovering that thousands of private user conversations had become searchable on Google, Bing, and other search engines. The incident revealed deeply personal discussions about mental health, business strategies, relationship problems, and confidential work matters—all accessible to anyone with basic search skills.

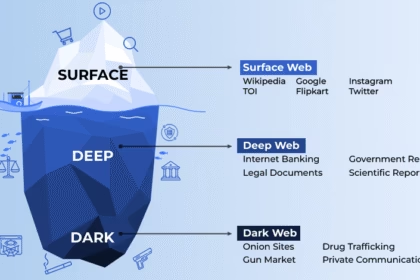

The privacy breach occurred through ChatGPT’s “share” feature, which allowed users to create public links to their conversations. When users enabled an option to “make this chat discoverable,” their exchanges became indexed by search engines like any other webpage. However, many users either misunderstood this setting or failed to grasp its full implications, inadvertently making sensitive information publicly available.
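For readers curious about the mechanics: a public page is generally eligible for indexing unless it tells crawlers otherwise, most commonly through a `robots` meta tag containing `noindex`. The article does not describe how OpenAI implemented the "discoverable" toggle, so the following is only a minimal sketch, assuming that mechanism, of how one might check whether a given HTML page asks not to be indexed. The class and function names are illustrative.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so normalize them.
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def allows_indexing(html: str) -> bool:
    """Return False if the page carries a 'noindex' (or 'none') directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return not ({"noindex", "none"} & parser.directives)
```

A page with no robots directive at all is treated as indexable here, which mirrors the default behavior of major crawlers and is why a public link without such a directive can surface in search results.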

How the Privacy Incident Unfolded

The problem surfaced when users discovered they could search “site:chatgpt.com/share” on Google to access thousands of strangers’ conversations with the AI assistant. Investigations revealed over 4,500 indexed conversations, many containing personally identifiable information including names, locations, work details, and intimate personal struggles.

What Made This Particularly Concerning:

- Users shared addiction struggles, trauma experiences, and mental health challenges

- Business professionals inadvertently exposed proprietary strategies and client information

- Some conversations included enough specific details to potentially identify individuals

- The feature required multiple steps to activate, but unclear interface design led to accidental sharing

Dane Stuckey, OpenAI’s chief information security officer, announced the feature’s removal on social media, describing it as “a short-lived experiment to help people discover useful conversations.” The company acknowledged that the feature “introduced too many opportunities for folks to accidentally share things they didn’t intend to.”

The Technical Reality Behind the Breach

OpenAI emphasized that only conversations explicitly marked as “discoverable” could appear in search results—not all shared links. The process required users to:

- Click the “Share” button on a conversation

- Create a public link

- Check a box labeled “make this chat discoverable”

- Proceed past the notice that the chat could appear in web searches

Despite these safeguards, the design proved inadequate. Users either rushed through the process or misinterpreted the checkbox as necessary for basic sharing functionality. The incident highlighted a critical gap between technical consent mechanisms and genuine user understanding.
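The distinction OpenAI drew between a shared link and a discoverable one can be pictured as two separate states. The sketch below is a hypothetical model rather than OpenAI's actual data structure: the `SharedLink` class, its field names, and the example URLs are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class SharedLink:
    """Hypothetical model of a shared conversation link."""
    url: str
    discoverable: bool = False  # opt-in: stays off unless the user checks the box


def may_appear_in_search(link: SharedLink) -> bool:
    """Only links explicitly marked discoverable are candidates for indexing."""
    return link.discoverable


links = [
    SharedLink("https://chatgpt.com/share/example-1"),  # shared, not discoverable
    SharedLink("https://chatgpt.com/share/example-2", discoverable=True),
]
exposed = [link.url for link in links if may_appear_in_search(link)]
print(exposed)
```

In this model the default is private, yet a single misread checkbox flips a link into the exposed set, which is the gap between technical consent and user understanding described above.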

Immediate Industry Response and Implications

OpenAI’s rapid response—removing the feature within hours of widespread attention—demonstrated how quickly privacy violations can escalate in the social media age. The company is now working with Google and other search engines to remove indexed content, though some conversations may remain temporarily cached.

This incident reflects a broader pattern in AI development:

- Google’s Bard faced a similar issue in 2023 when shared conversations appeared in search results

- Meta’s AI chatbot also experienced problems with unintended public sharing

- Consumer AI products often launch with limited privacy controls, addressing gaps only after public backlash

The breach underscores a fundamental tension in AI development: companies want to leverage collective user intelligence while protecting individual privacy. However, striking this balance requires more sophisticated approaches than simple opt-in checkboxes.

The Privacy Divide: Consumer vs. Enterprise Protection

The incident exposed a troubling two-tier privacy system in AI services. While consumer ChatGPT users had their conversations accidentally indexed, enterprise customers enjoyed robust protections that prevented such exposure.

Enterprise users receive:

- Guaranteed data isolation from search engines

- No use of conversations for model training

- Advanced access controls and audit capabilities

- Legal protections through comprehensive data processing agreements

Consumer users face:

- Limited privacy controls with confusing interfaces

- Potential use of conversations for AI training unless opted out

- Greater vulnerability to accidental data exposure

- Indefinite data retention policies that may conflict with regulations like GDPR

This disparity means that paying enterprise customers essentially receive privacy privileges that individual users cannot access, regardless of their own privacy needs.

Essential Protection Strategies for AI Users

Immediate Actions to Take:

- Review your ChatGPT settings: Visit Settings > Data controls to check shared links and delete unwanted ones

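- Opt out of training: In Settings > Data controls, turn off the model training toggle (labeled “Improve the model for everyone” in recent versions, previously “Chat History & Training”) to prevent future conversations from being used for model improvement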

- Use temporary chat mode: Enable this feature for sensitive discussions so they are not saved to your chat history

- Search for your content: Use “site:chatgpt.com/share [your name/company]” to check if any of your information appears in search results
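The search check in the last item can be scripted if you want to test several names or company terms. This is a minimal sketch: the `site:` query comes from the article, while the helper name and the use of Google's standard `/search?q=` URL are choices made here for illustration. It only builds the link to open in a browser and does not fetch results.

```python
from urllib.parse import urlencode


def build_share_search_url(term: str) -> str:
    """Build a Google search URL restricted to ChatGPT shared links."""
    query = f"site:chatgpt.com/share {term}"
    # urlencode percent-escapes ':' and '/' and turns spaces into '+'.
    return "https://www.google.com/search?" + urlencode({"q": query})


for term in ["Jane Doe", "Example Corp"]:
    print(build_share_search_url(term))
```

The same query string works in other search engines that support the `site:` operator, such as Bing.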

Long-term Privacy Practices:

- Limit personal information sharing: Never include Social Security numbers, full addresses, financial details, or confidential business information

- Use aliases and generic examples: Replace real names and identifying details with fictional alternatives when seeking advice

- Consider enterprise versions: For professional use, invest in business-grade AI tools that offer stronger privacy protections

- Stay informed about AI privacy policies: Regularly review the data practices of AI tools you use, as these policies frequently change
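The advice on aliases and sensitive identifiers can be partly automated before text is pasted into any AI tool. The following is a rough sketch under simple assumptions: the regular expressions catch only US-style Social Security numbers and common email formats, and the `redact` helper and its alias mapping are hypothetical. It is not a substitute for reviewing the text yourself.

```python
import re

# Deliberately simple patterns; real-world data will include formats these miss.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def redact(text: str, aliases: dict[str, str]) -> str:
    """Mask SSNs and emails, then swap known real names for aliases."""
    text = SSN_PATTERN.sub("[SSN]", text)
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    for real, alias in aliases.items():
        # re.escape keeps punctuation in names from being read as regex syntax.
        text = re.sub(re.escape(real), alias, text, flags=re.IGNORECASE)
    return text


prompt = "Maria Lopez (maria.lopez@acme.example, SSN 123-45-6789) works at Acme Corp."
print(redact(prompt, {"Maria Lopez": "Person A", "Acme Corp": "Company X"}))
```

Emails are masked before aliases are applied so that a name embedded in an address is not partially rewritten and left recognizable.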

What This Means for the Future of AI Privacy

This incident serves as a critical wake-up call for both AI developers and users. As AI tools become more integrated into our personal and professional lives, the stakes for privacy failures continue to rise. Companies that prioritize user privacy from the design stage—rather than addressing problems reactively—will likely gain competitive advantages.

Key lessons for the industry:

- Default privacy settings matter: Features that could expose sensitive information should require explicit, informed consent with clear warnings

- User interface design is crucial: Even technically secure processes can lead to privacy breaches if users don’t understand the implications

- Rapid response capabilities are essential: OpenAI’s quick action likely prevented more severe consequences

For users, this incident demonstrates that AI conversations should be treated with the same caution as confidential documents. While these tools feel conversational and private, they operate in complex digital infrastructures where data can be exposed through design flaws, security breaches, or policy changes.

As AI continues reshaping how we work and communicate, maintaining user trust isn’t just desirable—it’s essential for the industry’s long-term success. The companies that learn from incidents like this and implement genuinely user-centric privacy protections will ultimately lead the responsible development of artificial intelligence.

The ChatGPT indexing incident may have been brief, but its implications for AI privacy standards will likely influence platform design and user behavior for years to come.